By JOSHUA BENTON

Eyeballs. That’s what everyone on the internet seems to want — eyeballs.

To be clear, it’s not actual eyeballs, in the aqueous humor sense, that they’re looking for. It’s getting your eyeballs pointed at whatever content they produce — their game, their app, their news story, whatever — and however many ad units they can squeeze into your field of view. Your attention is literally up for auction hundreds or thousands of times a day — your asset, constantly sold by one group of third parties to another group of third parties.

The result is information overabundance. There is literally, as Ann Blair once put it, too much to know. And what share of that overabundance hits your corneas is largely determined by others — what your friends share, what platforms’ algorithms slot into view.

Given all that madness, the need for critical thinking is obvious. But so is the need for critical ignorance — the skill, tuned over time, of knowing what not to spend your attention currency on. It’s great to be able to find the needle in the haystack — but it’s also important to limit the time spent in hay triage along the way.

That’s the argument advanced in this new paper just published in Current Directions in Psychological Science. It’s titled “Critical Ignoring as a Core Competence for Digital Citizens,” and it’s by Anastasia Kozyreva, Sam Wineburg (Stanford), Stephan Lewandowsky (University of Bristol), and Ralph Hertwig. (I momentarily skipped over Kozyreva and Hertwig’s institutional affiliation only because it is sufficiently epic-sounding to deserve its own sentence: the Center for Adaptive Rationality at the Max Planck Institute for Human Development in Berlin.)

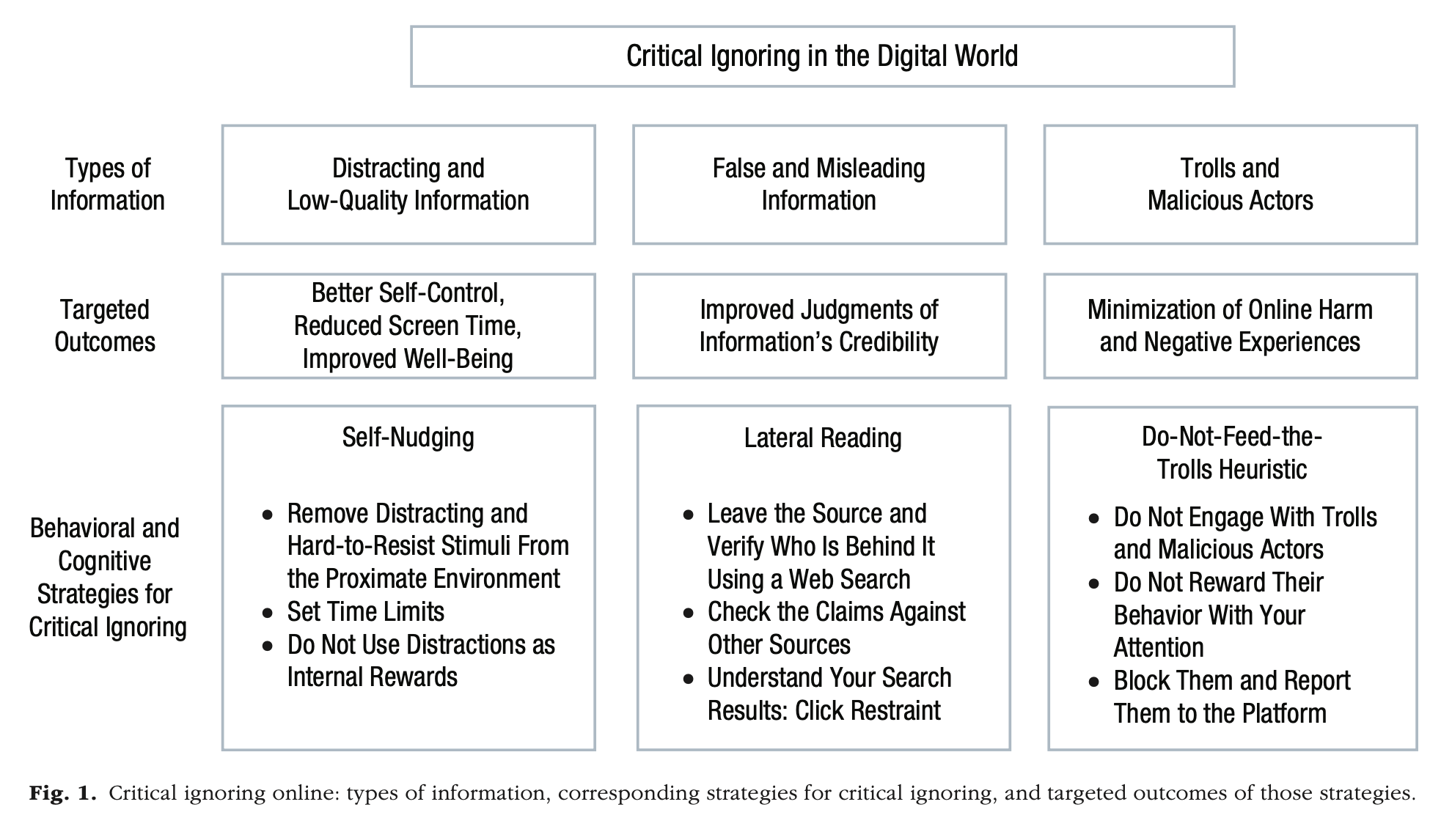

Low-quality and misleading information online can hijack people’s attention, often by evoking curiosity, outrage, or anger. Resisting certain types of information and actors online requires people to adopt new mental habits that help them avoid being tempted by attention-grabbing and potentially harmful content. We argue that digital information literacy must include the competence of critical ignoring — choosing what to ignore and where to invest one’s limited attentional capacities.

We review three types of cognitive strategies for implementing critical ignoring: self-nudging, in which one ignores temptations by removing them from one’s digital environments; lateral reading, in which one vets information by leaving the source and verifying its credibility elsewhere online; and the do-not-feed-the-trolls heuristic, which advises one to not reward malicious actors with attention. We argue that these strategies implementing critical ignoring should be part of school curricula on digital information literacy.

Teaching the competence of critical ignoring requires a paradigm shift in educators’ thinking, from a sole focus on the power and promise of paying close attention to an additional emphasis on the power of ignoring. Encouraging students and other online users to embrace critical ignoring can empower them to shield themselves from the excesses, traps, and information disorders of today’s attention economy.

Ignorance — noun, “lack of knowledge, understanding, or information about something” — is a growing field of study across disciplines. The sociology of knowledge is joined by the sociology of ignorance. Look around long enough and you’ll eventually stumble on your neighborhood agnotologist, a scholar of ignorance. Rather than treating ignorance as a pure negative — a missing drawer in the cabinet of your brain — more recent work has focused on the benefits, even the necessity, of strategic ignorance. There is, after all, too much to know; the choice to learn x is also a choice to ignore y and z. You just have to get good at picking the right x.

Much effort has been invested in repurposing the notion of critical thinking — that is, “thinking that is purposeful, reasoned, and goal directed” — from its origins in education to the online world. For example, Zucker (2019), addressing the National Science Teachers Association, wrote that because of the flood of misinformation “it is imperative that science teachers help students use critical thinking to examine claims they see, hear, or read that are not based on science.”

As important as the ability to think critically continues to be, we argue that it is insufficient to borrow the tools developed for offline environments and apply them to the digital world. When the world comes to people filtered through digital devices, there is no longer a need to decide what information to seek. Instead, the relentless stream of information has turned human attention into a scarce resource to be seized and exploited by advertisers and content providers.

Investing effortful and conscious critical thinking in sources that should have been ignored in the first place means that one’s attention has already been expropriated. Digital literacy and critical thinking should therefore include a focus on the competence of critical ignoring: choosing what to ignore, learning how to resist low-quality and misleading but cognitively attractive information, and deciding where to invest one’s limited attentional capacities.

“Deliberate ignorance” is — spoiler alert! — the phrase academics use to mean “the conscious choice to ignore information even when the costs of obtaining it are negligible.” (Avoiding spoilers is a kind of deliberate ignorance, you see.) This paper’s authors define “critical ignoring” more narrowly, as

…a type of deliberate ignorance that entails selectively filtering and blocking out information in order to control one’s information environment and reduce one’s exposure to false and low-quality information. This competence complements conventional critical-thinking and information-literacy skills, such as finding reliable information online, by specifying how to avoid information that is misleading, distractive, and potentially harmful.

It is only by ignoring the torrent of low-quality information that people can focus on applying critical search skills to the remaining now-manageable pool of potentially relevant information. As do all types of deliberate ignorance, critical ignoring requires cognitive and motivational resources (e.g., impulse control) and, somewhat ironically, knowledge: In order to know what to ignore, a person must first understand and detect the warning signs of low trustworthiness.

Okay, doc — but how do you do that? The authors outline this schema of tactics targeting three different genres of mis-, dis-, or malinformation: distracting/low-quality info, false/misleading info, and everyone’s favorite, trolls.

The first, “self-nudging,” is basically about impulse control — the information equivalent of a diet book that tells you not to leave potato chips sitting around on your kitchen counter, lest you be tempted. Think of it as media mindfulness.

Using extensively studied mechanisms of interventions, such as positional effects (e.g., making healthy food options more accessible in a supermarket or a cafeteria), defaults (e.g., making data privacy a default setting), or social norms, the self-nudger redesigns choice architectures to prompt behavioral change. However, instead of requiring a public choice architect, self-nudging empowers people to change their own environments, thus making them citizen choice architects whose autonomy and agency is preserved and fostered.

To deal with attention-grabbing information online, people can apply self-nudging principles to organize their information environment so as to reduce temptation. For instance, digital self-nudges, such as setting time limits on the use of social media (e.g., via the Screen Time app on iPhone) or converting one’s screen to a grayscale mode, have been demonstrated to help people reduce their screen time.

A more radical self-nudge consists of removing temptations by deactivating the most distracting social-media apps (at least for a period of time). In a study by Allcott et al. (2020), participants who were incentivized to deactivate their Facebook accounts for 1 month gained on average about 60 min per day for offline activities, a gain that was associated with small increases in subjective well-being. Reduced online activity also modestly decreased factual knowledge of political news (but not political participation), as well as political polarization (but not affective polarization).

As this study shows, there are trade-offs between potential gains (e.g., time for offline activities) and losses (e.g., potentially becoming less informed) in such solutions. The key goal of self-nudging, however, is not to optimize information consumption, but rather to offer a range of measures that can help people regain control of their information environments and align those environments with their goals, including goals regarding how to distribute their time and attention among different competing sources (e.g., friends on social media and friends and family offline).

“Lateral reading” is familiar turf to readers of Wineburg’s earlier work. It’s a spatial metaphor: When confronted with questionable information, don’t focus on digging down deeper into the uncertain source. Instead, move laterally to other sources for confirmation. It breaks down to “open more tabs,” basically, which is always good advice.

Lateral reading begins with a key insight: One cannot necessarily know how trustworthy a website or a social-media post is by engaging with and critically reflecting on its content. Without relevant background knowledge or reliable indicators of trustworthiness, the best strategy for deciding whether one can believe a source is to look up the author or organization and the claims elsewhere (e.g., using search engines or Wikipedia to get pointers to reliable sources).

The strategy of lateral reading was identified by studying what makes professional fact-checkers more successful in verifying information on the Web compared with other competent adults (undergraduates at an elite university and Ph.D. historians from five different universities). Instead of dwelling on an unfamiliar site (i.e., reading vertically), fact-checkers strategically and deliberately ignored it until they first opened new tabs to search for information about the organization or individual behind it. If lateral reading indicates that the site is untrustworthy, examining it directly would waste precious time and energy.

Although this strategy might require motivation and time to learn and practice, it is a time-saver in the long run. In the study just mentioned, fact-checkers needed only a few seconds to determine trustworthiness of the source.

Finally, there’s the sound advice of not feeding the trolls.

Sometimes it is not the information but the people who produce it who need to be actively ignored. Problematic online behavior, including promulgation of disinformation and harassment, can usually be traced back to real people — more often than not to just a few extremely active individuals. Indeed, close to 65% of antivaccine content posted to Facebook and Twitter in February and March 2021 is attributable to just 12 individuals.

Despite being a minority, conspiracy theorists and science denialists can be vocal enough to cause damage. Their strategy is to consume people’s attention by creating the appearance of a debate where none exists. One productive response is to resist engaging with these individuals or their claims by ignoring them…

…it is important to note that no one can — or should — bear the burden of online abuse and disinformation alone. The do-not-feed-the-trolls heuristic must be complemented by users reporting bad actors to platforms and by platforms implementing consistent content-moderation policies. [Hear that, Elon? —Ed.] It is also crucial to ensure that trolling and flooding tactics of science denialists are not left without response on the platform level. Platforms’ content-moderation policies and design choices should be the first line of defense against harmful online behavior. Strategies and interventions aimed at fostering critical thinking and critical ignoring competencies in online users should not be regarded as a substitute for developing and implementing systemic and infrastructural solutions at the platform and regulator levels.

These three prescriptions may seem less than revolutionary to the savvy internet user. (I think I first heard “don’t feed the trolls” on a newsgroup circa 1995.) But they’re worth remembering — and transmitting to the less savvy. You do have some degree of control over the information diet your phone cooks up. You can resist the gravitational pull of a conspiracy black hole by hitting Cmd-T. And the trolls are big enough as it is without you offering up fresh meat. Journalists are in the knowledge creation business — more facts, every day, every hour! Our business model is built around those eyeballs. But from the audience’s point of view, there’s often wisdom in some targeted ignorance.

First published in the Nieman Journalism Lab.